-

A Tour of NUS Engineering’s student-led vehicle projects – Formula SAE, RoboMaster, Mars Rover, and Bumblebee

Last week I had the rare opportunity to get an inside tour of the various student-led “vehicle” projects being built […]

-

A Tour of PIXEL

A tour of IMDA’s PIXEL Incubation and Innovation space for Singaporean startups & independent digital content creators.

-

Memory Portals: The process behind the work

Until this year, I’d never had a work on the banks of the Singapore River. So when Asian Civilisations Museum […]

-

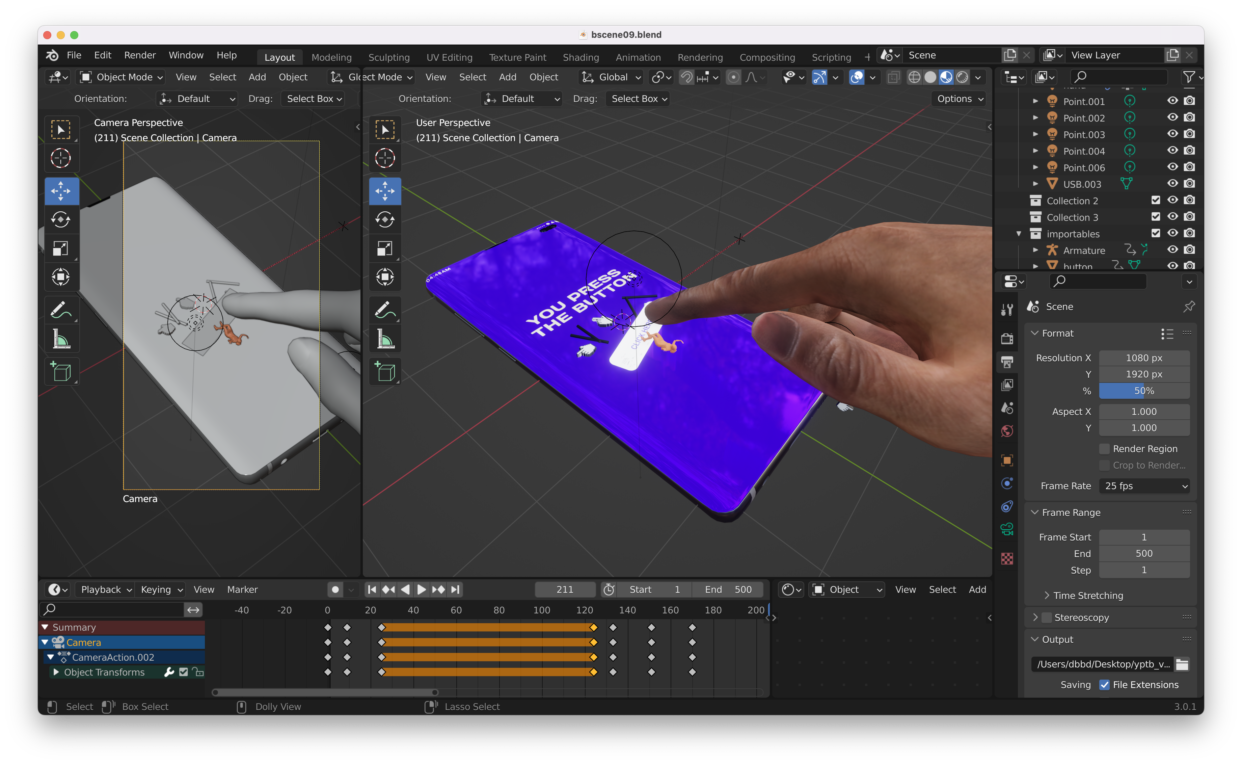

You Press the Button: The process behind the work

Last year, Kristine and Gillian approached me with an interesting opportunity to show my work on a big advertising billboard. […]

-

Artist who makes artworks about Singapore River finally takes boat ride on Singapore River

You would think that, as an artist who has made so many works about the Singapore River, I would […]

-

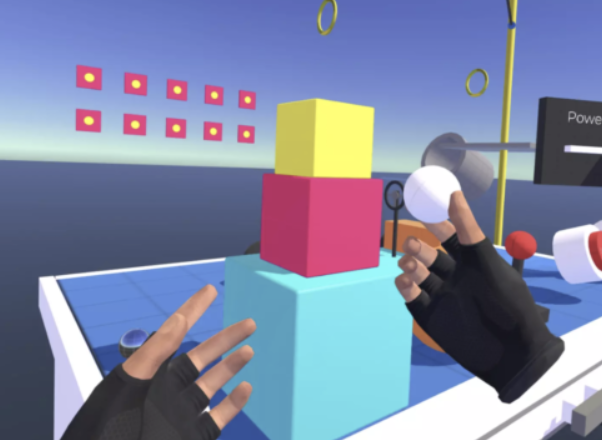

Developing for Oculus Quest 2 in Unity with MacBook Pro 16″

I have some big announcements to make later this year, but in the meantime I’ve been toiling away at various […]

-

Attending IEEE VR 2022 for the First Time

I’m attending IEEE VR for the first time! I’m currently not in academia although my day job is as an […]

-

Singapore Art Week 2022: Where to see Debbie’s works

Here’s a roundup of where you can find Debbie’s works during the ever-chaotic Singapore Art Week! WELL… I TRIED […]